I wanted to publish this one last month, but there was some confusion about whether the bonus would be included in any of the node tables. That doesn’t seem to be the case, so unfortunately the calculator currently relies on a hard-coded 10% bonus for payouts through zkSync starting October 2021. As always, the latest version is available at the GitHub repo linked in the top post!

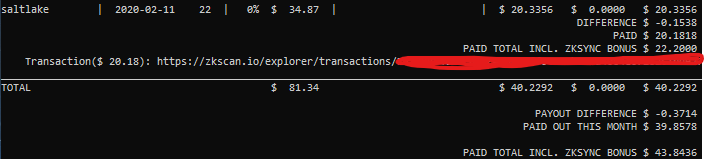

Here’s a sample of what that looks like (per satellite and totals):

Note: The calculator needs the receipt link to be available in the node databases to determine that zkSync was used for a payout. At the moment that requires a node restart for some reason (the transaction link on the node dashboard is not available until after a restart). If it isn’t visible, try restarting your node.
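For anyone curious how a hard-coded bonus like this can work, here is a rough sketch. It is not the calculator's actual code. The function names, the `"zksync"` substring check on the receipt and the idea that the bonus is 10% of the paid amount are all assumptions made for illustration; only the 10% rate and the October 2021 start come from this post.

```python
from datetime import date

ZKSYNC_BONUS_RATE = 0.10                 # hard-coded 10% bonus, as described in the post
ZKSYNC_BONUS_START = date(2021, 10, 1)   # first payout period eligible for the bonus
ZKSYNC_RECEIPT_MARKER = "zksync"         # ASSUMPTION: substring that marks a zkSync receipt


def is_zksync_payout(receipt):
    """Return True if the receipt link suggests the payout went through zkSync."""
    # A missing receipt (e.g. before a node restart) cannot be classified,
    # so it is treated as "not zkSync" and no bonus is applied.
    if not receipt:
        return False
    return ZKSYNC_RECEIPT_MARKER in receipt.lower()


def zksync_bonus(paid, period, receipt):
    """Return the bonus for one payout, or 0.0 if the payout does not qualify."""
    if period < ZKSYNC_BONUS_START or not is_zksync_payout(receipt):
        return 0.0
    # ASSUMPTION: the bonus is a flat percentage of the amount paid out.
    return round(paid * ZKSYNC_BONUS_RATE, 2)


if __name__ == "__main__":
    # Hypothetical receipt strings, not real transaction links.
    print(zksync_bonus(20.0, date(2021, 10, 1), "zksync:0xabc"))
    print(zksync_bonus(20.0, date(2021, 10, 1), None))
```

The second call shows why the restart matters: without a receipt in the database there is nothing to tell a zkSync payout apart from a regular one, so no bonus is shown.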

Changelog

v10.4.0 - Add zkSync bonus

- The zkSync bonus has been added for nodes that receive payouts through zkSync, for October 2021 and later