Not bad. I was running the benchmark on my Windows machine as well, but without spinning disks. I noticed that Windows Defender is consuming 60% of my CPU because it keeps scanning the million pieces. So there is room for improvement, for example by excluding the path from scanning.

I have kept Defender deactivated since the Windows Vista era. While the node is running, the stats will tend to be lower than when running this test on an idle PC. I had a fun learning experience setting this up. Thank you.

Just out of curiosity, did someone run the tool on a RAM disk?

You can be the first one to set a record.

Sure, using some DDR4-3200 CL16 (2x32GB):

uploaded 10000 pieces in 594.208814ms (996.16 MiB/s)

collected 10000 pieces in 126.386501ms (4683.46 MiB/s)
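The benchmark output does not state the piece size, but it can be estimated from the reported throughput, elapsed time, and piece count. The following is a minimal sketch of that arithmetic; the function name `impliedPieceBytes` is hypothetical, and it assumes the tool's MiB means 2^20 bytes.

```go
package main

import (
	"fmt"
	"time"
)

// impliedPieceBytes estimates the average piece size in bytes from a
// reported throughput in MiB/s, an elapsed time in the format printed by
// the benchmark (for example "594.208814ms"), and the number of pieces.
// Assumption: 1 MiB = 1024 * 1024 bytes.
func impliedPieceBytes(mibPerSec float64, elapsed string, pieces int) (float64, error) {
	d, err := time.ParseDuration(elapsed)
	if err != nil {
		return 0, err
	}
	if pieces <= 0 {
		return 0, fmt.Errorf("pieces must be positive, got %d", pieces)
	}
	totalBytes := mibPerSec * 1024 * 1024 * d.Seconds()
	return totalBytes / float64(pieces), nil
}

func main() {
	// Figures taken from the RAM disk upload line above.
	size, err := impliedPieceBytes(996.16, "594.208814ms", 10000)
	if err != nil {
		panic(err)
	}
	fmt.Printf("implied average piece size: %.0f bytes\n", size)
}
```

For the RAM disk run this works out to roughly 62 KB per piece, which is in line with the estimate further down the thread that 1.2M pieces come to about 70GB.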

Then test with more pieces. The option is right there.

It would be interesting to test under RAM-constrained scenarios, but I don’t have a node to test this. For what it’s worth, after running the new benchmark tool on my XFS HDD, the transfer to the disk is almost entirely a sequential write operation, so I assume that would be the new bottleneck.

Anyone here with a Pi node?

Oh boy, do I really have to repeat it over and over again? Run it yourself. You can argue all day. This discussion is pointless. Run it yourself!

Turns out Windows is a bit slow on our statfs call. We are now working on a fix for that. I would expect that we can more than double the performance on Windows to make it almost as fast as Linux nodes.
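The post does not say what the fix looks like. Purely as an illustration of one generic way to reduce the cost of an expensive free-space query, here is a sketch that reuses the last result for a short time. Everything in it (the type `cachedFreeSpace`, its fields, and the helper `queriesForLookups`) is hypothetical and not taken from the storagenode code.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// cachedFreeSpace wraps an expensive free-space query and reuses the last
// result until ttl has elapsed. Hypothetical type, for illustration only.
type cachedFreeSpace struct {
	mu      sync.Mutex
	query   func() (int64, error) // the expensive call, e.g. a statfs wrapper
	now     func() time.Time      // injectable clock, so the cache can be tested
	ttl     time.Duration
	value   int64
	fetched time.Time
	valid   bool
}

// Get returns the cached value if it is still fresh, otherwise it runs the
// expensive query and stores the result.
func (c *cachedFreeSpace) Get() (int64, error) {
	c.mu.Lock()
	defer c.mu.Unlock()
	if c.valid && c.now().Sub(c.fetched) < c.ttl {
		return c.value, nil
	}
	v, err := c.query()
	if err != nil {
		return 0, err
	}
	c.value, c.fetched, c.valid = v, c.now(), true
	return v, nil
}

// queriesForLookups performs the given number of lookups against a cache
// whose clock never advances and reports how many expensive queries ran.
func queriesForLookups(lookups int) int {
	calls := 0
	fixed := time.Unix(0, 0)
	c := &cachedFreeSpace{
		query: func() (int64, error) { calls++; return 1 << 40, nil },
		now:   func() time.Time { return fixed },
		ttl:   time.Second,
	}
	for i := 0; i < lookups; i++ {
		if _, err := c.Get(); err != nil {
			panic(err)
		}
	}
	return calls
}

func main() {
	fmt.Println("expensive queries for 10000 lookups:", queriesForLookups(10000))
}
```

The trade-off of any such cache is that the reported free space can be slightly stale, so the time-to-live would need to be short relative to how quickly the disk fills.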

Any other “exotic” system or file system? There might be more file system specific issues like this one.

One of our developers is running a Pi3. So basically the slowest setup I can think of.

before

uploaded 10000 pieces in 9m18.728594725s (1.06 MiB/s)

after

uploaded 10000 pieces in 1m39.056623161s (5.98 MiB/s)

collected 10000 pieces in 4.304190402s (137.52 MiB/s)

The biggest problem for the Pi3 seems to be the rest of the code, such as verifying orders and so on. The CPU takes too much time for that and can’t hit the HDD at maximum speed.

A Pi4 might have similar limitations. A Pi5 should work better. The CPU is not only faster; it also has a better instruction set that needs fewer CPU cycles for some calls.
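To put a number on the Pi3 before/after upload times, the durations can be parsed exactly as the benchmark prints them. A small sketch, assuming a hypothetical helper named `speedup`:

```go
package main

import (
	"fmt"
	"time"
)

// speedup reports how many times faster the "after" run was than the
// "before" run. Both arguments use the duration format printed by the
// benchmark, which time.ParseDuration understands.
func speedup(before, after string) (float64, error) {
	b, err := time.ParseDuration(before)
	if err != nil {
		return 0, err
	}
	a, err := time.ParseDuration(after)
	if err != nil {
		return 0, err
	}
	if a <= 0 {
		return 0, fmt.Errorf("after duration must be positive, got %v", a)
	}
	return b.Seconds() / a.Seconds(), nil
}

func main() {
	// Upload times from the Pi3 runs quoted above.
	s, err := speedup("9m18.728594725s", "1m39.056623161s")
	if err != nil {
		panic(err)
	}
	fmt.Printf("Pi3 upload speedup: %.2fx\n", s)
}
```

The result is a speedup of about 5.6x, which matches the ratio of the reported throughputs (5.98 MiB/s versus 1.06 MiB/s).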

So I tried testing with 1.2M pieces, which is about 70GB, exceeding my 64GB RAM size.

The piecestore process peaks at about 7GB used memory, and dirty memory peaks around 3GB. The disk, which is currently using Unraid parity, writes at about 40MB/s.

Unfortunately the process crashes, having written about 11GB to the disk. I have attached the output below:

panic: main.go:173: pieceexpirationdb: database is locked [recovered]

panic: main.go:173: pieceexpirationdb: database is locked [recovered]

panic: main.go:173: pieceexpirationdb: database is locked [recovered]

panic: main.go:173: pieceexpirationdb: database is locked

goroutine 251 [running]:

github.com/spacemonkeygo/monkit/v3.newSpan.func1(0x0)

/root/go/pkg/mod/github.com/spacemonkeygo/monkit/v3@v3.0.22/ctx.go:155 +0x2ee

panic({0x1804ac0?, 0xc0c3593290?})

/usr/lib/go-1.22/src/runtime/panic.go:770 +0x132

github.com/spacemonkeygo/monkit/v3.newSpan.func1(0x0)

/root/go/pkg/mod/github.com/spacemonkeygo/monkit/v3@v3.0.22/ctx.go:155 +0x2ee

panic({0x1804ac0?, 0xc0c3593290?})

/usr/lib/go-1.22/src/runtime/panic.go:770 +0x132

github.com/spacemonkeygo/monkit/v3.newSpan.func1(0x0)

/root/go/pkg/mod/github.com/spacemonkeygo/monkit/v3@v3.0.22/ctx.go:155 +0x2ee

panic({0x1804ac0?, 0xc0c3593290?})

/usr/lib/go-1.22/src/runtime/panic.go:770 +0x132

github.com/dsnet/try.e({0x1babf60?, 0xc0c3593170?})

/root/go/pkg/mod/github.com/dsnet/try@v0.0.3/try.go:206 +0x65

github.com/dsnet/try.E(...)

/root/go/pkg/mod/github.com/dsnet/try@v0.0.3/try.go:212

main.uploadPiece.func1({0x1bcff18, 0xc0f1935220})

/storj/cmd/tools/piecestore-benchmark/main.go:173 +0x14a

github.com/spacemonkeygo/monkit/v3/collect.CollectSpans.func1(0xc0004a2320?, 0xc0ce687ea0, 0xc17390f470, 0xc0ce687f38)

/root/go/pkg/mod/github.com/spacemonkeygo/monkit/v3@v3.0.22/collect/ctx.go:67 +0x9f

github.com/spacemonkeygo/monkit/v3/collect.CollectSpans({0x1bcff18, 0xc0f1935220}, 0xc0ce687f38)

/root/go/pkg/mod/github.com/spacemonkeygo/monkit/v3@v3.0.22/collect/ctx.go:68 +0x24e

main.uploadPiece({0x1bcff18, 0xc0f1935180}, 0xc000730000, 0xc01d0ffda0)

/storj/cmd/tools/piecestore-benchmark/main.go:170 +0x176

main.main.func1()

/storj/cmd/tools/piecestore-benchmark/main.go:209 +0x6c

created by main.main in goroutine 1

/storj/cmd/tools/piecestore-benchmark/main.go:207 +0x3ea

1.2M would take hours to run. Maybe start with something more reasonable like 10K and then increase it depending on how long it took.

That would be another candidate where the file system might make a difference. I would expect at least 100MB/s. If the end result stays the same, please zip the traces.json file and send it to us. That will tell us what the root cause is.

Or is your system CPU limited like the Pi3?

So I re-ran at 10k, 100k, and 200k pieces:

/home/piecestore-benchmark --pieces-to-upload 10000

uploaded 10000 pieces in 1.106661509s (534.88 MiB/s)

collected 10000 pieces in 963.438378ms (614.39 MiB/s)

# About 10 seconds of disk write activity, process usage peaks at 100M, dirty at 100M

/home/piecestore-benchmark --pieces-to-upload 100000

uploaded 100000 pieces in 2m49.644625591s (34.89 MiB/s)

collected 100000 pieces in 1m7.711359301s (87.42 MiB/s)

# About 4 minutes of disk write activity, process usage peaks at 2.3GB, dirty at 3GB

/home/piecestore-benchmark --pieces-to-upload 200000

uploaded 200000 pieces in 6m25.849221541s (30.68 MiB/s)

collected 200000 pieces in 1m28.576264704s (133.65 MiB/s)

# About 10 minutes of disk write activity, process usage peaks at 4.5GB, dirty at 3.4GB

At a certain point we are basically limited by the disk write speed as expected, and we don’t need a very large number of pieces compared to total RAM before this occurs. The dirty memory is capped to an extent.
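The shift from cache-bound to disk-bound behaviour is easier to see as average upload time per piece. The sketch below recomputes that from the three runs above; the helper name `perPieceMillis` is hypothetical.

```go
package main

import (
	"fmt"
	"time"
)

// perPieceMillis returns the average time per piece in milliseconds, given
// a piece count and an elapsed time in the benchmark's output format.
func perPieceMillis(pieces int, elapsed string) (float64, error) {
	d, err := time.ParseDuration(elapsed)
	if err != nil {
		return 0, err
	}
	if pieces <= 0 {
		return 0, fmt.Errorf("pieces must be positive, got %d", pieces)
	}
	return d.Seconds() * 1000 / float64(pieces), nil
}

func main() {
	// Upload lines from the 10k, 100k, and 200k runs above.
	runs := []struct {
		pieces  int
		elapsed string
	}{
		{10000, "1.106661509s"},
		{100000, "2m49.644625591s"},
		{200000, "6m25.849221541s"},
	}
	for _, r := range runs {
		ms, err := perPieceMillis(r.pieces, r.elapsed)
		if err != nil {
			panic(err)
		}
		fmt.Printf("%7d pieces: %.3f ms per piece\n", r.pieces, ms)
	}
}
```

With these figures the average rises from roughly 0.11 ms per piece at 10k to roughly 1.7 ms at 100k and 1.9 ms at 200k, so most of the slowdown happens before 100k pieces and the curve then flattens.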

In this case the disk I am testing (other disks are running GC right now and I don’t want to interrupt them) is basically 90% full. With Unraid parity, sequential write speed is essentially half that of the same disk without parity. With a simple dd write test I get 60MB/s sustained:

dd if=/dev/zero of=test.dat bs=1M count=1000 conv=fdatasync

1000+0 records in

1000+0 records out

1048576000 bytes (1.0 GB, 1000 MiB) copied, 17.4721 s, 60.0 MB/s

So the benchmarks report getting a little over 50% of the ideal sequential write speed limit.
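The comparison mixes units: the benchmark prints MiB/s while dd prints MB/s (10^6 bytes). A minimal sketch of the conversion, assuming the benchmark's MiB means 2^20 bytes and using a hypothetical helper `fractionOfSequential`:

```go
package main

import "fmt"

// fractionOfSequential converts a benchmark throughput in MiB/s to MB/s
// and returns it as a fraction of the dd sequential throughput in MB/s.
func fractionOfSequential(benchMiBps, ddMBps float64) float64 {
	benchMBps := benchMiBps * 1024 * 1024 / 1e6
	return benchMBps / ddMBps
}

func main() {
	const ddMBps = 60.0 // sustained dd result quoted above
	for _, bench := range []float64{34.89, 30.68} {
		f := fractionOfSequential(bench, ddMBps)
		fmt.Printf("%.2f MiB/s is %.1f%% of %.1f MB/s\n", bench, f*100, ddMBps)
	}
}
```

With the numbers above, the 200k run comes out at about 54% of the dd figure and the 100k run at about 61%.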

With regard to the crashing, it occurs again at 400k pieces. I tried doubling db.max_open_conns to no avail. I could not find a traces.json file after the benchmark crashes, and setting debug.trace-output to some path does not work either. What am I missing here?

That’s about what I expected would happen on larger tests. 50% of sequential write speed is not bad, considering it’s working with lots of small files.

% piecestore-benchmark -pieces-to-upload 10000 --disable-sync

uploaded 10000 pieces in 8.054874535s (73.49 MiB/s)

collected 10000 pieces in 4.097536584s (144.46 MiB/s)

% piecestore-benchmark -pieces-to-upload 10000

uploaded 10000 pieces in 7.215732401s (82.03 MiB/s)

collected 10000 pieces in 4.049173572s (146.18 MiB/s)

BTW, on FreeBSD the app complains that the file exists on subsequent invocations:

piecestore-benchmark -pieces-to-upload 10000

panic: main.go:75: open storage/trash/.trash-uses-day-dirs-indicator: file exists

goroutine 1 [running]:

github.com/dsnet/try.e({0x89daa0?, 0xc000357c80?})

/root/go/pkg/mod/github.com/dsnet/try@v0.0.3/try.go:206 +0x65

github.com/dsnet/try.E1[...](...)

/root/go/pkg/mod/github.com/dsnet/try@v0.0.3/try.go:220

main.CreateEndpoint({0x8c3f00, 0x27993a0}, 0xc000103f80, 0xc0004f8360)

/root/storj/cmd/tools/piecestore-benchmark/main.go:75 +0x1e8

main.main()

/root/storj/cmd/tools/piecestore-benchmark/main.go:199 +0x2a7

Just what @littleskunk says.

My benchmarks from a while ago took up to 50 hours per single run. That’s the price you pay for a thorough test. The relation of speed to number of pieces is not linear, and we need to be aware of that, given that some of the recent issues came up only on large nodes.

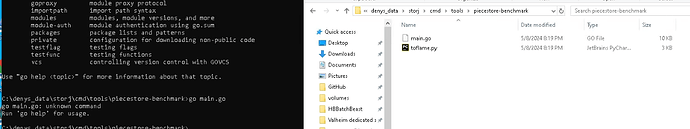

Follow this link to install GCC

Then install Go for Windows.

Is there a ready-made EXE file planned for Windows?

I’ll try my luck: is there a chance this will settle the disputes about decreased performance on different file systems and under virtualization?

If anyone has compared file systems/virtual servers with THIS tool, it will be very interesting to see the results.

I did but there is no point in posting that since you will continue to disbelieve it anyway. So the only way for you to stop bitching around is to run the test yourself.