My understanding was that TTL data would just be deleted without needing bloom filters, so the effort would be fairly low.

That's my understanding too, as long as your databases are working.

The data we are currently uploading has a TTL. So in a couple of weeks it will expire and we will just continue uploading. I can only repeat it over and over again: we don’t run this test for fun. There is an actual use case behind it that we are trying to simulate. The moment the deals are signed, we will stop our uploads and let the customers use the network. Ideally our test run is so precise that you will not notice any change, except that the traffic will be coming from a different satellite.

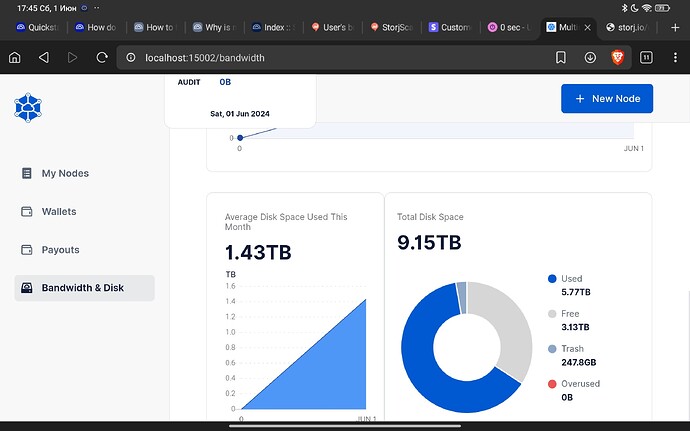

We are not there yet. Next week we have one or two alternative node selection methods that we want to compare against the current choice-of-2 node selection. There is still room for improvement. That will change the load we are all seeing on our nodes, but I think by now it is fair to say that if your node is overloaded, you might want to fix that, because if we are lucky we will have this load for several months.

I don’t believe you’ll continue uploading past the previous maximum usage for Saltlake. That would mean eating into the profit, which we (both Storj and SNOs) are trying to avoid. So no, uploaded data will top out at the previous Saltlake maximum.

Once that is hit, uploading test data will stop. Since you are tweaking things and uploads are getting faster, that means faster replacement (TTLed data swapped for new test data). Eventually one of the clients will sign, and their use case test scenario will be done (so no new test data for that test). Faster replacement + fewer test scenarios = eventual exhaustion of new test data.

Just keep it running, and fix only when something is broken.

Perhaps it’s time to move out of Windows VMs?

Perhaps they are actually Gbits?

You don’t have to believe me. It will take a few more days to hit the size at which you expect the uploads to stop. That will prove my point. I believe that with the current load we have to keep this running until the end of the month, and given the current TTL, that would make it an endless loop that never stops. That is pretty much exactly what we expect these customers to do.
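Why an "endless loop" of TTL uploads does not grow without bound: if uploads run at a constant rate and every piece expires after a fixed TTL, the amount stored climbs for one TTL period and then plateaus at roughly rate × TTL, because each day's new data replaces the day's expired data. The sketch below is a simplified model under assumptions not taken from the thread (a constant daily upload, each batch deleted exactly when its TTL runs out, no garbage-collection delay); the function name and the numbers in the example are hypothetical, not the actual test parameters.

```python
from collections import deque


def simulate_ttl_storage(days: int, daily_upload: float, ttl_days: int) -> list[float]:
    """Return the amount stored at the end of each day.

    Simplified, hypothetical model: the same amount is uploaded every day,
    and each daily batch is deleted exactly ttl_days after it was uploaded.
    """
    live = deque()  # one entry per daily batch that has not expired yet
    history = []
    for _ in range(days):
        live.append(daily_upload)
        if len(live) > ttl_days:
            live.popleft()  # the oldest batch has reached its TTL and expires
        history.append(sum(live))
    return history


if __name__ == "__main__":
    # Hypothetical numbers: 1 unit/day with a 3-day TTL levels off at 3 units.
    print(simulate_ttl_storage(10, 1.0, 3))
```

In this model the plateau is `daily_upload * ttl_days`, so uploading faster or using a longer TTL raises the ceiling, but uploads can continue indefinitely without stored data growing past it.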

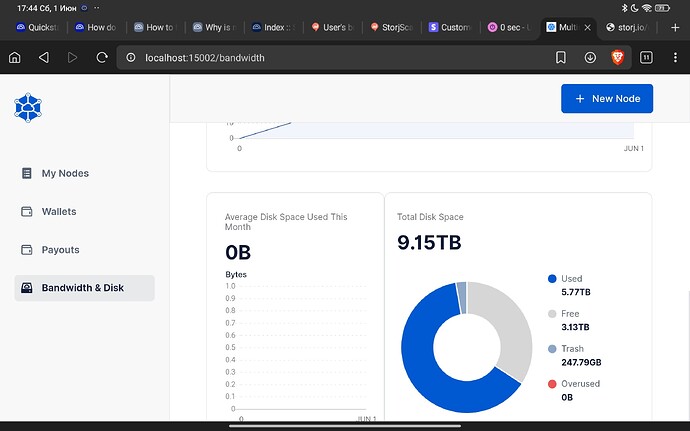

And why did we clean up so much space from the other satellites? You will soon find out that we are going to reserve a lot more space on SLC…

I’ll be happy to be proven wrong, but way happier to be proven right.

Happy either way? Sounds good to me. I'm with you on that one. The performance improvements will pay off either way. Currently the public network is 4 times faster than the Storj Select network. I really enjoy telling everyone in the company “told ya”.

(Most likely Storj Select just needs the same node selection, but that comes with a different set of challenges that we will look into later.)

Kind of expected, given the difference in the number of nodes… But yes, the companies would have a choice.

My point exactly. Testing needed to be done to improve things. We may disagree on the timing of the test (important update, big deletes, a lot of network traffic), which pushed the nodes to their limits (and, for everyone who ignored repeated advice on how to set up efficient nodes, way past their crashing point). I’m happy about the improvements.

More reserved space? Good, bigger payouts.

More clients? Good, organic growth and none of that parabolic to the moon crap which is a disaster for everyone involved.

Reserved up to the old Saltlake max + lower usage by EU1 (used space has been dropping for the past 3 months) + higher usage from US1 (which should at some point balance out EU1)? Good, we know where we stand with regard to expanding. Note: I don’t count AP1 since it’s very small.

That is the point: there should be a common set of settings that we need to tweak, or that are recommended to tweak.

Asking people to fix things could in some cases even make matters worse, since we don’t know exactly what we should change.

There is nothing to tweak. It must work with default settings, and it does (confirmed by many: over 90% of the population, according to the stats I saw).

If you have issues, you need to fix them.

- 1 node - 1 CPU core

- 1 node - 1 disk

- No VM

- I’m serious: NO VM! No need to argue with me; VMs work badly, as confirmed by everyone who has run a node in one.

- No proxy

- No VPN/proxy to circumvent the /24 rule, including a “free” Oracle VM with WireGuard/OpenVPN, if you have a public IP. Especially if you have a public IP.

Things seem to be running fine now (though nodes are definitely using more memory, but that may be expected at the current data rates).

Re: tuning and tweaking: logs have always pointed me to any actual problems. And in the rare cases where logs didn’t help… it ended up being a hardware problem like dodgy RAM or a flaky PSU.

Operation Baked Potato continues!

Hey, potatoes can work, just don’t overload them. I like my tiny Pi3…

My Raspi (version 4 with 4 GB RAM) died with a segmentation fault, then it wouldn’t boot and got stuck in a “root account locked” loop. Just 3 hours of downtime. It is still way faster than a Windows node.