15% is on the higher side, but on top of regular bloom filters, it does not sound unreasonable.

Almost 2TB/IP trashed on my nodes.

Sweet!

I wonder where we are in the process of purging all the zombie data? Is it over now?

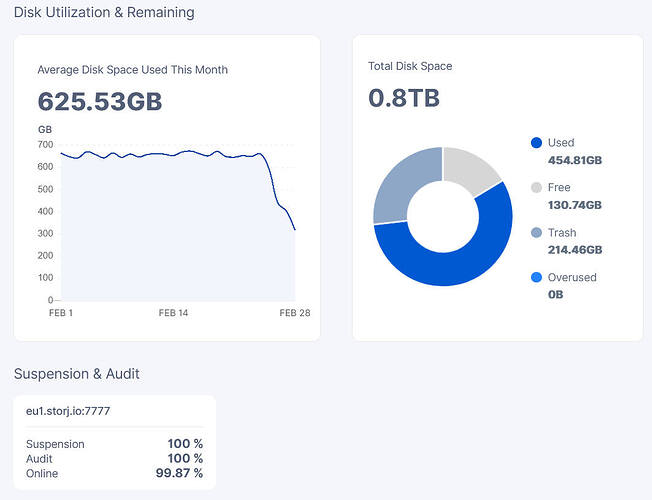

If my most recent daily satellite report for Eu1 is correct (it shows just 316GB, and we all know how accurate the daily reports are, haha), it looks like my node might trash more data soon, assuming there is a lag in bloom filter generation. If my actual used space drops to the number on the report, I will have lost about 50% of the data. Time will tell.

Why not turn on the debug port and look at the metrics?

I have 58TB in trash, and at least 300TB left.

I’m not even sure what I would be looking for inside the debug port metrics. Could you be more specific?

Have you ever calculated what percent of the entire network you are storing?

For me, the “blobs_usage” metric in the debug port looks like the same number that I’m seeing in my dashboard’s used space near the pie chart.

Dashboard=452.99GB

debug port=4.52993728768e+11

/api/sno/ = 452993728768

So I’m thinking the number in the debug port is based on my node’s local data and not on what the satellite is saying about my node.
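For anyone who wants to check that the three numbers above really are the same value, here is a minimal sketch. It only uses the figures quoted in this post; the helper names `parse_metric_bytes` and `format_gb` are made up for illustration, and it assumes the dashboard shows decimal gigabytes (1GB = 10^9 bytes).

```python
def parse_metric_bytes(text: str) -> int:
    """Turn a metric value printed in scientific notation into whole bytes."""
    return int(float(text))


def format_gb(num_bytes: int) -> str:
    """Format bytes as decimal gigabytes (assumption: 1GB = 10**9 bytes)."""
    return f"{num_bytes / 1e9:.2f}GB"


# Values copied from the post above.
debug_port_bytes = parse_metric_bytes("4.52993728768e+11")
api_bytes = 452993728768

print(debug_port_bytes == api_bytes)  # debug port and /api/sno/ agree
print(format_gb(api_bytes))           # compare with the dashboard figure
```

The debug port value is just the same byte count in scientific notation, and dividing by 10^9 gives the dashboard figure.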

Why would I need this? It is useless info.

I just think it’s interesting. I once estimated that you store 0.5% of all the data on the network based on the “storage_total_bytes_after_expansion” for each of the satellites from https://stats.storjshare.io/data.json
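Roughly how such an estimate could be done, as a sketch only. It assumes the JSON has already been downloaded and parsed into a mapping of satellite name to a dict that holds “storage_total_bytes_after_expansion”; that layout is my assumption, so check it against the real file. The function name `network_share_percent` and all numbers in the example are placeholders, not real network figures.

```python
def network_share_percent(node_bytes: int, satellite_stats: dict) -> float:
    """Return the node's share of all stored data, in percent.

    satellite_stats is assumed to map a satellite name to a dict containing
    'storage_total_bytes_after_expansion' (assumed layout, not verified).
    """
    total = sum(
        stats["storage_total_bytes_after_expansion"]
        for stats in satellite_stats.values()
    )
    if total <= 0:
        raise ValueError("no stored data reported by any satellite")
    return 100 * node_bytes / total


# Placeholder numbers purely for illustration, not real network data.
example_stats = {
    "satellite-a": {"storage_total_bytes_after_expansion": 1000},
    "satellite-b": {"storage_total_bytes_after_expansion": 3000},
}
share = network_share_percent(20, example_stats)
print(f"{share:.2f}% of the network")
```

Swap in your own used space across all nodes and the parsed stats to get your own number.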

Do you know this guy?

http://www.th3van.dk

Are we being untrashed… again? Too much growth in the last hour for it to be real uploads.

Yeah, looks like it. All my nodes except for my one hashstore node are showing zero trash now.

Untrashed again?

Why this yo-yo dance with data? Is it testing? Or some bug again?

I don’t mind: they need to be super careful with customer data. And if anything looks fishy… they only have that one-week window to ask for it back.

(And although you never want to test in prod… it must be satisfying to see the trash-recovery system working as designed on 20000+ nodes!)

But it also proves the delete process is not working as designed…