Hashstore is still under development and you will not be migrated. With version 120 you CAN start a migration if you WANT to try this new feature.

I can’t remember if this was answered:

- If I have badger cache enabled and I want to migrate to hashstore, how should I deal with it?

A. Should I leave badger enabled until the migration completes and then turn badger off? How do I know the migration has finished?

B. Should I turn off badger and then start the migration? If so, should I turn off the startup piece scan too? Or turn off badger, run a new startup piece scan, then enable migration?

What about lazy mode and the startup piece scan? Do they interfere with migration? Should both be kept off?

When migration is finished, the blobs folder contains only empty directories and no piece files.
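The "only empty directories" condition above can be checked with a few lines of Python. This is a minimal sketch, not an official tool: the function names are made up for illustration, and the path you pass in is whatever your own blobs folder is.

```python
import os


def count_remaining_pieces(blobs_dir):
    """Count regular files left anywhere under blobs_dir."""
    if not os.path.isdir(blobs_dir):
        # Avoid reporting "finished" just because the path is wrong.
        raise NotADirectoryError(blobs_dir)
    remaining = 0
    for _root, _dirs, files in os.walk(blobs_dir):
        remaining += len(files)
    return remaining


def migration_looks_finished(blobs_dir):
    """True when the tree holds only (possibly nested) empty directories."""
    return count_remaining_pieces(blobs_dir) == 0
```

Calling `migration_looks_finished(...)` with the path to your blobs folder returns `True` once no piece files are left. It only tells you the folder is empty, which is the symptom described above, not anything about the internal migration state.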

This is completely up to you. These features are completely independent. However, once you have migrated to hashstore, there is no point in using a badger cache; the difference will be minuscule.

However, during migration, it may help to handle the current load.

They can, but not so much. However, we didn’t test exactly this case.

What’s false will not be applied, what’s true will be applied.

In other words, whatever you enable by setting it to true will be processed.

Also, it is applied only after a node restart.

I collected some data regarding the trash overhead. For a growing node it is up to 25%. The idea here is to spend more IOPS on storing data and fewer IOPS on cleaning up the garbage: only clean up LOG files that have enough trash in them. But this behavior changes the moment the node is full. At that point we don’t need to preserve IOPS for incoming uploads and can instead use them to clean up more trash. The way this currently works is by compacting at least the LOG file with the highest trash amount, even if it is below the 25% threshold. That compact call should take just a few minutes. All we need to do is call it 18000 times in a row and we have an 18TB node with almost no trash overhead. Well, it is a bit more complicated, because the trash that was removed will get replaced with new uploads, and they have a high chance of causing new garbage. Maybe think about this more like a gradient that starts the moment the node is full and eventually hits 0% trash.
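The selection rule described above can be sketched roughly like this. It is an illustration of the idea only, not the actual storagenode code: `LogFile`, `select_for_compaction` and the choice to rank by trash ratio rather than trash bytes are all assumptions.

```python
from dataclasses import dataclass

# From the post: a growing node only rewrites LOG files with enough trash.
TRASH_THRESHOLD = 0.25


@dataclass
class LogFile:  # hypothetical stand-in for one hashstore LOG file
    name: str
    size_bytes: int
    trash_bytes: int

    @property
    def trash_ratio(self):
        return self.trash_bytes / self.size_bytes if self.size_bytes else 0.0


def select_for_compaction(logs, node_full):
    """Pick the LOG files one compact call would rewrite."""
    picked = [log for log in logs if log.trash_ratio >= TRASH_THRESHOLD]
    if not picked and node_full:
        # A full node has no uploads to protect, so it compacts at least
        # the worst LOG file even when it is below the threshold.
        candidates = [log for log in logs if log.trash_bytes > 0]
        if candidates:
            picked = [max(candidates, key=lambda log: log.trash_ratio)]
    return picked
```

With logs at 10%, 30% and 5% trash, a growing node picks only the 30% one. Once that one is gone and the node is full, the 10% log gets picked next, which is the gradual drain towards 0% described above.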

This logic needs a bit more fine tuning. I can see in my data that my smaller test nodes do indeed have 0-2% trash. I believe that for bigger nodes the compact interval needs to be smaller. My understanding is that with the current code the node would stay full for a day or so before calling compact the next time. We can either increase the batch size to, let’s say, 1000 LOG files (which might be a bit too much) and get to a low trash amount in a few weeks, or call the single compact more frequently, like once per minute or so. Well, first I need to get my node full, so I guess this question can wait a long time before it affects my nodes.
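To put rough numbers on the two options, here is a back-of-the-envelope calculation. It assumes 18000 LOG files (the 18TB example above) and deliberately ignores the new trash that arrives while compaction is running, so the real numbers will be worse. The function name is made up.

```python
LOG_FILES = 18_000  # from the post: 18000 compact calls for an 18TB node


def days_to_compact_all(logs_per_call, calls_per_day, total_logs=LOG_FILES):
    """Days needed to touch every LOG file once, ignoring new trash."""
    calls_needed = -(-total_logs // logs_per_call)  # ceiling division
    return calls_needed / calls_per_day


scenarios = [
    ("1 LOG file per call, 1 call per day", 1, 1),
    ("1000 LOG files per call, 1 call per day", 1000, 1),
    ("1 LOG file per call, 1 call per minute", 1, 24 * 60),
]
for label, per_call, per_day in scenarios:
    print(f"{label}: {days_to_compact_all(per_call, per_day):.1f} days")
```

One file per day is obviously hopeless for a node of this size (18000 days). A batch of 1000 per day comes out at 18 days and one call per minute at 12.5 days, so both proposals land in the same "a few weeks" range.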

This thought isn’t about Hashstore: just in general. But even if most data going to a node is temporary… some of it must be long-term/permanent? If so… then older nodes should slowly build up layer after layer of that long-term data, like sediment? They should age like fine wine?

At some point I could see a SNO even wanting to protect an old full node (like with some parity)… simply because that long-term data is so valuable and hard to replace.

Alas, not a problem most of us have… just talking out loud…

I believe the other frequently asked question is how to move the hashtable to an SSD. Currently there is no option for that. The reason is that we don’t know if it is worth the risk. At the moment hashstore with the hashtable on disk is faster than piecestore + badger cache. We tested hashstore with the hashtable on SSD and it would give us a small performance gain, at least for Storj Select with its high stream of short TTL data. Storj Select could benefit from moving the hashtable to SSD. But there are 2 points to consider.

- Losing the badger cache is no big deal. The node can run with an empty badger cache and will slowly recreate it. Losing the hashtable is a ticket to disqualification. Rebuilding the hashtable from scratch would require reading all LOG files (not implemented). Reading 20 TB of data will take a long time, and in the meantime the node will have to stay offline to avoid audit failures. It might be possible to avoid disqualification for a low number of nodes, but even then we are still talking about weeks of downtime, getting suspended, a reduction in used space caused by the repair system, and so on. I would argue that this risk is not worth it. For bigger setups with multiple HDDs sharing the same SSD, it becomes unlikely that all nodes can be rescued in time.

- For a public node this extra performance might be meaningless. Storj Select has to go down the throughput rabbit hole: how many concurrent uploads can the customer push to a single node? Moving the hashtable to an SSD means a few more free IOPS on the HDD. For the public network this equation is a bit different. A public node needs to optimize for a high upload success rate. There is a chance that moving the hashtable to an SSD makes no measurable difference in upload success rate. In that case we would be talking about taking an unnecessary risk. Please keep that in mind.

My plan is to wait and see how things develop in the Storj Select network. If the option to move the hashtable to an SSD gets implemented, I might test it out on a node to see if there is a measurable difference in upload success rate. If there is almost no difference, I will move the hashtable back to HDD. Why take an avoidable risk if there is no advantage to gain?

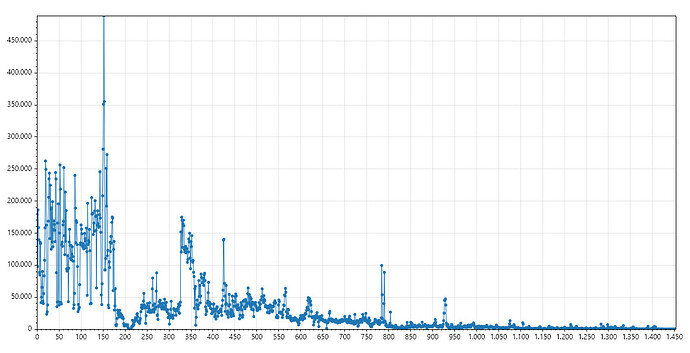

This is a file age histogram of my oldest node, almost 4 years but still not full. The average file age is 276 days.

Having the hashtable location adjustable is not only about performance. We could also use redundant storage for hashtables to avoid losing the entire node just because of a single file error.

I would consider this question from the opposite position: losing a single file, or a bunch of files in filestore, or a database file brings negligible risk, and hence all of that can operate on single drives with badblocks. Whatever. However losing a hash table is a huge risk that might lead to immediate disqualification even with a single fault, therefore I’d like to explicitly store it on a RAID1, or maybe even RAID1c3.

Does hashstore scan the occupied space?

Previously, if you deleted or damaged database files, you had to wait until the disk was scanned and the indicators were updated, but now there is no scanning?

Is there any way to force scanning?

Even if hashstore keeps used-space-filewalker… it’s going to be counting 1000 logs-per-TB… instead of 3-4million .sj1’s-per-TB. So it should still be dramatically quicker.

Shouldn’t it?

In my experience keeping hashtables on HDD is more risky than on SSD. I lost one hashtable almost at the beginning of data migration on one node because it was written to an area with a bad block. And now I’m watching the Audit score crawl down to 96% and wondering if I’m going to win the lottery or not.

While a whole large HDD with log files isn’t worth redundancy, the relatively small hashtables definitely are. That’s why I’m moving them to RAID1 SSDs now.

This huge list of docker options does the job:

--mount type=bind,source="[path to SSD]/hashstore/1wFTAgs9DP5RSnCqKV1eLf6N9wtk4EAtmN5DpSxcs8EjT69tGE/s0/meta/",destination=/app/config/storage/hashstore/1wFTAgs9DP5RSnCqKV1eLf6N9wtk4EAtmN5DpSxcs8EjT69tGE/s0/meta/ --mount type=bind,source="[path to SSD]/hashstore/1wFTAgs9DP5RSnCqKV1eLf6N9wtk4EAtmN5DpSxcs8EjT69tGE/s1/meta/",destination=/app/config/storage/hashstore/1wFTAgs9DP5RSnCqKV1eLf6N9wtk4EAtmN5DpSxcs8EjT69tGE/s1/meta/ --mount type=bind,source="[path to SSD]/hashstore/12EayRS2V1kEsWESU9QMRseFhdxYxKicsiFmxrsLZHeLUtdps3S/s0/meta/",destination=/app/config/storage/hashstore/12EayRS2V1kEsWESU9QMRseFhdxYxKicsiFmxrsLZHeLUtdps3S/s0/meta/ --mount type=bind,source="[path to SSD]/hashstore/12EayRS2V1kEsWESU9QMRseFhdxYxKicsiFmxrsLZHeLUtdps3S/s1/meta/",destination=/app/config/storage/hashstore/12EayRS2V1kEsWESU9QMRseFhdxYxKicsiFmxrsLZHeLUtdps3S/s1/meta/ --mount type=bind,source="[path to SSD]/hashstore/12L9ZFwhzVpuEKMUNUqkaTLGzwY9G24tbiigLiXpmZWKwmcNDDs/s0/meta/",destination=/app/config/storage/hashstore/12L9ZFwhzVpuEKMUNUqkaTLGzwY9G24tbiigLiXpmZWKwmcNDDs/s0/meta/ --mount type=bind,source="[path to SSD]/hashstore/12L9ZFwhzVpuEKMUNUqkaTLGzwY9G24tbiigLiXpmZWKwmcNDDs/s1/meta/",destination=/app/config/storage/hashstore/12L9ZFwhzVpuEKMUNUqkaTLGzwY9G24tbiigLiXpmZWKwmcNDDs/s1/meta/ --mount type=bind,source="[path to SSD]/hashstore/121RTSDpyNZVcEU84Ticf2L1ntiuUimbWgfATz21tuvgk3vzoA6/s0/meta/",destination=/app/config/storage/hashstore/121RTSDpyNZVcEU84Ticf2L1ntiuUimbWgfATz21tuvgk3vzoA6/s0/meta/ --mount type=bind,source="[path to SSD]/hashstore/121RTSDpyNZVcEU84Ticf2L1ntiuUimbWgfATz21tuvgk3vzoA6/s1/meta/",destination=/app/config/storage/hashstore/121RTSDpyNZVcEU84Ticf2L1ntiuUimbWgfATz21tuvgk3vzoA6/s1/meta/
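Since the option list above follows one pattern (each satellite ID, the stores `s0` and `s1`, and the `meta/` folder), it can be generated instead of typed by hand. A small sketch, assuming the same satellite IDs and container paths as in the options above; the helper name `mount_options` is made up.

```python
# Satellite IDs and container path copied from the docker options above.
SATELLITE_IDS = [
    "1wFTAgs9DP5RSnCqKV1eLf6N9wtk4EAtmN5DpSxcs8EjT69tGE",
    "12EayRS2V1kEsWESU9QMRseFhdxYxKicsiFmxrsLZHeLUtdps3S",
    "12L9ZFwhzVpuEKMUNUqkaTLGzwY9G24tbiigLiXpmZWKwmcNDDs",
    "121RTSDpyNZVcEU84Ticf2L1ntiuUimbWgfATz21tuvgk3vzoA6",
]
STORES = ["s0", "s1"]
CONTAINER_ROOT = "/app/config/storage/hashstore"


def mount_options(ssd_root):
    """Build one --mount option per satellite and store (8 in total)."""
    options = []
    for sat in SATELLITE_IDS:
        for store in STORES:
            source = f"{ssd_root}/hashstore/{sat}/{store}/meta/"
            destination = f"{CONTAINER_ROOT}/{sat}/{store}/meta/"
            options.append(
                f'--mount type=bind,source="{source}",destination={destination}'
            )
    return options
```

`print(" ".join(mount_options("[path to SSD]")))` reproduces the single line above, with the placeholder replaced by whatever your SSD path is. Docker bind mounts with `--mount` expect the source directories to exist already, so they need to be created (and the existing `meta/` contents copied over) before starting the container.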

Why would scanning all logs to restore the hashtable take a long time for a 20TB drive? I thought hashstore dramatically accelerates piece reads and writes?

If a 20TB drive of millions of piecefiles would take a week on a high RAM system, why should reading thousands of log files take weeks?

In order to restore a hashtable we need to go through those log files and read all piece headers. So it is again millions. I guess the limit is sequential HDD read speed.

Yes, but there is far less file system access, so it’s just reading big files. With piecestore, you also access the file system for each piece.

Given today’s HDD speeds, I guess it could be done in 2 days for a full 20TB HDD.
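A quick sanity check of that estimate, assuming the whole drive is read sequentially at a constant rate. The throughput figures below are illustrative assumptions, not measurements, and a node that is serving traffic at the same time would be slower.

```python
def full_read_days(capacity_tb, read_mb_per_s):
    """Days needed to read capacity_tb once at a constant sequential rate."""
    total_bytes = capacity_tb * 10**12
    seconds = total_bytes / (read_mb_per_s * 10**6)
    return seconds / 86_400


# Assumed sustained sequential read speeds in MB/s.
for speed in (120, 200, 250):
    print(f"20 TB at {speed} MB/s: {full_read_days(20, speed):.2f} days")
```

At a sustained 120 MB/s this comes out just under 2 days, and at 200 MB/s a bit over 1 day, so "2 days for a full 20TB HDD" is a plausible upper end for a pure sequential scan.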

Why don’t you allocate less space? It will make your nodes full in no time.

I wonder:

- how do hashstore and compaction deal with underallocation?

- on a full node, how big will the overhead be?

- could it pass below the 5 GB free space limit and crash the node?

There might be a misunderstanding. I am sure my node will end up with a low trash amount. My data shows that 1-2% trash is possible. I just shared my decision. Getting my node full will take at least 6 months, more likely 12 months. That’s enough time for me not to worry about smaller details. All I need to know for now is that the compact job can compact LOG files with less than 25% trash in them. You can look it up yourself if you like: https://review.dev.storj.io/c/storj/storj/+/15748