With all respect, are you sure? What about the vetting constraint?

I’m getting a LOT more than I would have expected behind just one IP…

How much traffic are you getting now? My single node currently gets around 240 Mbps.
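To put 240 Mbps next to the disk space numbers in this thread, here is a small Go conversion. It assumes the rate is sustained around the clock and uses decimal units (1 TB = 10^12 bytes); the function name is just for illustration.

```go
package main

import "fmt"

// mbpsToTBPerDay converts a sustained rate in megabits per second
// into decimal terabytes (10^12 bytes) transferred per day.
func mbpsToTBPerDay(mbps float64) float64 {
	const secondsPerDay = 24 * 60 * 60
	bitsPerDay := mbps * 1e6 * secondsPerDay
	return bitsPerDay / 8 / 1e12 // bits -> bytes -> TB
}

func main() {
	// 240 Mbps sustained, the figure quoted above.
	fmt.Printf("%.3f TB/day\n", mbpsToTBPerDay(240))
}
```

It prints `2.592 TB/day`. Real ingress is not constant, so treat this as what that rate would mean if it held all day.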

Couldn’t it just be that choice-of-6 underutilizes slower nodes over time?

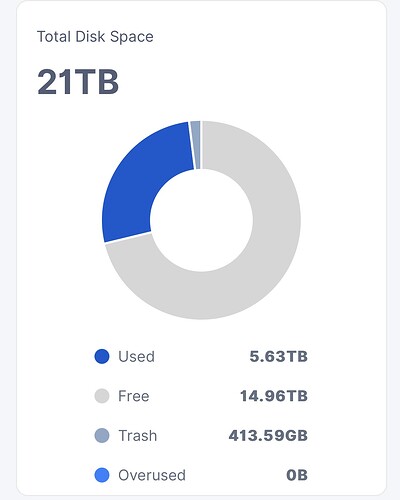

This is my overview of today’s tests so far. But shortly after the largest peaks, 4 out of 6 IPs filled up all their space, so I’m now working with only the 2 that still have free space.

The SSD cache is also saturated again. That doesn’t seem to make me lose many races, though. The system seems to manage quite easily, although some things in the DSM web UI are getting a little slow. Nothing I can’t handle.

So, k=20000. Close enough.

I tell you, automate this with hyperopt (or a similar tool).
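hyperopt is a Python library for hyperparameter search. As a much simpler stand-in, here is a Go sketch that binary-searches for the smallest total piece count that still meets a throughput target. It assumes a hypothetical `fastEnough` check (one real test run per call) and that throughput only improves as the total grows, which noisy real-world measurements will not strictly guarantee.

```go
package main

import "fmt"

// lowestAcceptableTotal returns the smallest total piece count n in [lo, hi]
// for which fastEnough(n) is true, assuming fastEnough is monotone
// (false for small n, true from some point on). It returns hi+1 if no
// value in the range qualifies. Each call to fastEnough stands in for one
// test run, so binary search keeps the number of runs low.
func lowestAcceptableTotal(lo, hi int, fastEnough func(n int) bool) int {
	for lo <= hi {
		mid := lo + (hi-lo)/2
		if fastEnough(mid) {
			hi = mid - 1 // mid works; look for something even lower
		} else {
			lo = mid + 1 // mid too slow; the answer is above it
		}
	}
	return lo
}

func main() {
	// Hypothetical toy predicate in place of a real throughput test.
	fastEnough := func(n int) bool { return n >= 38 }
	fmt.Println(lowestAcceptableTotal(31, 45, fastEnough)) // prints 38
}
```

Binary search needs only about log2 of the range in test runs. A tool like hyperopt becomes the better fit once several parameters are tuned at once.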

Something like:

```go
// Delay the upload by 30 s when the order limit carries a piece expiration (TTL data).
if !limit.PieceExpiration.IsZero() { time.Sleep(30 * time.Second) }
```

Put in the right place in the code, that will do it. It will affect the number of non-TTL uploads too, though, and you’ll be breaking the T&C.

Pretty easy to check if you know what to look for.

I kind of think they’ll put the results into marketing resources soon anyway.

Final test for today is the lowest long tail I feel comfortable with: 16/20/30/33.
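Reading 16/20/30/33 as minimum/repair/success/total (my interpretation of the notation), the long tail is total minus success: the uplink starts 33 piece uploads and cancels the 3 slowest once 30 have finished. A small Go sketch of that arithmetic, using a hypothetical `rsScheme` type:

```go
package main

import "fmt"

// rsScheme holds a Reed-Solomon setting written as minimum/repair/success/total.
type rsScheme struct {
	Minimum, Repair, Success, Total int
}

// LongTail is how many uploads are started beyond the success threshold;
// the slowest ones get cancelled once Success pieces have landed.
func (s rsScheme) LongTail() int { return s.Total - s.Success }

// Expansion approximates storage overhead: pieces kept per piece needed.
func (s rsScheme) Expansion() float64 { return float64(s.Success) / float64(s.Minimum) }

// StartedFactor is an upper bound on upload traffic per byte of segment,
// reached only if every started upload ran to completion.
func (s rsScheme) StartedFactor() float64 { return float64(s.Total) / float64(s.Minimum) }

func main() {
	for _, s := range []rsScheme{{16, 20, 30, 33}, {16, 20, 30, 35}, {16, 20, 30, 38}} {
		fmt.Printf("%d/%d/%d/%d: long tail %d, expansion %.4f, started %.4f\n",
			s.Minimum, s.Repair, s.Success, s.Total,
			s.LongTail(), s.Expansion(), s.StartedFactor())
	}
}
```

For 33, 35 and 38 total pieces the long tail is 3, 5 and 8, while the stored expansion stays at 30/16 = 1.875. Only the number of uploads started (and the bandwidth spent on cancelled ones) grows.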

Hopefully choice-of-n with n=6 can weed out enough bad nodes to make this work. It might be a bit too aggressive.

Maybe a report on Medium or some blog site, to promote Storj more.

Have you kept in mind the incubated mini-nodes?

“Incubator” nodes are vetted, small and full. Those shouldn’t really make a difference.

Damn… such huge ingress on my 6-month-old node, which shares an IP with my 3.5-year-old node that quit the SL satellite. The old one has dropped from 14.5 TB to 8.5 TB, but the new one recovered all the losses with these tests.

I have to prepare for new upgrades next year if this trend continues.

Unvetted nodes are probably faster than average. I mean, we are talking about potato nodes all the time. Also, their disks are not yet fragmented and not many filewalkers are running. So no surprise to me.

You are using that term too often. How about you just tell us what to change next?

Dial the speed selector up to 11. That should make us sweat ;))

Full like my 10 TB node that freed up half its space? Or full again?

16/20/30/33 was too low. I could see on my own node that it was bumpier, as if every now and then it hits more than 3 slower nodes and can’t get to maximum throughput. Well, now we know.

Let’s try this again with 16/20/30/35 to see if 2 more nodes are enough.
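A rough model of why a long tail of 3 is fragile: assume, purely for illustration, that each of the `total` nodes contacted for a segment is independently “slow” with some made-up probability p. The upload then has to wait on a slow node whenever more than total − success of them are slow, i.e. with probability P(X > total − success) for X ~ Binomial(total, p). A Go sketch:

```go
package main

import (
	"fmt"
	"math"
)

// lgFact returns ln(x!) via math.Lgamma.
func lgFact(x int) float64 {
	v, _ := math.Lgamma(float64(x) + 1)
	return v
}

// binomPMF returns P(X = k) for X ~ Binomial(n, p), computed in log space
// to avoid overflow in the binomial coefficient. Assumes 0 < p < 1.
func binomPMF(n, k int, p float64) float64 {
	logC := lgFact(n) - lgFact(k) - lgFact(n-k)
	return math.Exp(logC + float64(k)*math.Log(p) + float64(n-k)*math.Log1p(-p))
}

// probWaitOnSlow is the chance that more than total-success of the
// contacted nodes are slow, so the upload must wait for at least one of them.
func probWaitOnSlow(total, success int, p float64) float64 {
	prob := 0.0
	for k := total - success + 1; k <= total; k++ {
		prob += binomPMF(total, k, p)
	}
	return prob
}

func main() {
	const p = 0.05 // hypothetical share of slow nodes
	for _, total := range []int{33, 35, 38} {
		fmt.Printf("30 of %d: P(wait on a slow node) = %.4f\n",
			total, probWaitOnSlow(total, 30, p))
	}
}
```

The absolute numbers depend entirely on the made-up p and ignore correlated slowness (for example, many nodes behind one congested link), but the shape matches the observation: each extra node in the long tail makes waiting on a slow node much less likely.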

Well, the typical “incubator node” is 500 GB, has been running for a few months and is now full. The idea is to have a few small nodes that have been pre-vetted, so that when your main nodes are getting full, you just transfer those small ones onto a large HDD and don’t have to wait for the whole vetting process to happen.

What if there are many of them that are new, unvetted, and half full?

TL;DR: because unvetted nodes belong mostly to serious SNOs with serious networks.

With the IP limit OFF, they suddenly joined in and inflated the test results.

Probably because only significant SNOs, who have had xx or xxx nodes vetted for a long time, incubate spare nodes, just in case. Those operators have serious equipment and networks. That, combined with the suspended /24 IP rule, uncovered their network potential and showed that the great majority of unvetted nodes are connected to good networks. It also shows that only the biggest players think about making new nodes, to secure their existing operation or to expand. If they are only spare nodes, then the question is why they worry. It’s not so easy to lose a node; you have to be offline for 30 days. Sure, if you run, say, 200 nodes and lose some, you want spare nodes. But if you have 200 and run 200 spares with minimal HDD space, I would question that. For me it’s proooobably because those xx, xxx or maybe xxxx nodes run from the same location, just on different IPs.

That’s why they are so afraid: if something happens, all nodes are cooked, so they have to run a lot of spares as well. To me, it says there are no new nodes from outsiders, and I conclude the pay rate isn’t encouraging. Not off-topic, just another argument: if you want more space to be added, and importantly, from new people, NEW people to join, maybe you could consider sharing a little bit more with us SNOs. If you face a need for rapid growth, isn’t it better to think about it earlier? My stance still stands: $2.5/TB for storage is fair! And how wonderful! You can use the results of this test to work out how to adjust the Reed-Solomon settings to meet the numbers, so everyone is happy! Long live Storj!

So you just discovered that most unvetted nodes are spare parts for the most serious SNOs, mostly with online nodes circumventing your /24 rule (or they couldn’t be doing this seriously). Now, if you see a lot of nodes on the same IP but with small HDDs, you most likely got the real IP of those operators, or at least their main IP, without a VPN. No one sane would keep 10-20+ nodes on the same IP; even 3-4 neighbors give no gains. I have one such node. I’m doing nothing about it yet, but I will.
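The /24 rule discussed above, as I understand it, treats all nodes in the same /24 subnet as one location when picking nodes for a segment (and it was switched off for these tests). As a sketch of how one might spot that clustering, assuming only a list of node IPv4 addresses as hypothetical input, this Go snippet groups addresses by /24 using the standard `net` package:

```go
package main

import (
	"fmt"
	"net"
)

// subnet24 returns the /24 network (e.g. "203.0.113.0/24") an IPv4 address
// falls into, or "" if the string is not a valid IPv4 address.
func subnet24(addr string) string {
	ip := net.ParseIP(addr).To4()
	if ip == nil {
		return ""
	}
	mask := net.CIDRMask(24, 32)
	n := net.IPNet{IP: ip.Mask(mask), Mask: mask}
	return n.String()
}

// groupBySubnet24 counts how many node addresses share each /24.
func groupBySubnet24(addrs []string) map[string]int {
	counts := make(map[string]int)
	for _, a := range addrs {
		if s := subnet24(a); s != "" {
			counts[s]++
		}
	}
	return counts
}

func main() {
	// Documentation-range addresses, not real nodes.
	addrs := []string{"203.0.113.5", "203.0.113.77", "203.0.113.200", "198.51.100.9"}
	fmt.Println(groupBySubnet24(addrs))
}
```

It prints `map[198.51.100.0/24:1 203.0.113.0/24:3]`: with the rule active, the three nodes in 203.0.113.0/24 would compete for the same share of uploads.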

If you run xx-xxxx nodes, you do it for profit, and seriously, and you need 1 IP per HDD or it makes no sense. Not sure if I want to add more at the moment, though I will play pangolin with “no surprise to me”. As for what to change? I don’t know; it depends on whether that’s really a problem, from a throughput standpoint of course. Beyond throughput I don’t want to go off-topic, and that’s where I’ll stop.

OK, it looks like we need to stay at 16/20/30/38, because anything lower than that gets too slow.

We are finishing our tests for today and will run it over night on a lower total throughput.