So, I looked at tcpdump. And what do you know, when Kuma cannot ping, tcpdump does not see anything coming in. And yet the internet on the node works: it receives and sends traffic.

So, then I looked at /var/log/messages… And what do I see?

Cosmic disgrace, that's what:

Mar 12 17:34:51 storagenode inadyn[23648]: Updating IPv4 cache for node-1.arrogantrabbit.com

Mar 12 17:39:00 storagenode inadyn[23648]: Communication with checkip server 1.1.1.1 failed, run again with 'inadyn -l debug' if problem persists

Mar 12 17:39:00 storagenode inadyn[23648]: Retrying with built-in 'default', http://ifconfig.me/ip ...

Mar 12 17:39:00 storagenode inadyn[23648]: Please note, https://1.1.1.1/cdn-cgi/trace seems unstable, consider overriding it inyour configuration with 'checkip-server = default'

Mar 12 18:09:51 storagenode inadyn[23648]: Communication with checkip server 1.1.1.1 failed, run again with 'inadyn -l debug' if problem persists

Mar 12 18:09:51 storagenode inadyn[23648]: Retrying with built-in 'default', http://ifconfig.me/ip ...

Mar 12 18:09:51 storagenode inadyn[23648]: Please note, https://1.1.1.1/cdn-cgi/trace seems unstable, consider overriding it inyour configuration with 'checkip-server = default'

Mar 12 18:11:55 storagenode inadyn[23648]: Update needed for alias node-1.arrogantrabbit.com, new IP# 104.18.0.0

Mar 12 18:12:11 storagenode inadyn[23648]: Updating IPv4 cache for node-1.arrogantrabbit.com

Mar 12 18:14:15 storagenode inadyn[23648]: Update needed for alias node-1.arrogantrabbit.com, new IP# 104.193.135.243

Mar 12 18:14:29 storagenode inadyn[23648]: Updating IPv4 cache for node-1.arrogantrabbit.com

Mar 12 18:18:36 storagenode inadyn[23648]: Update needed for alias node-1.arrogantrabbit.com, new IP# 104.18.0.0

Mar 12 18:18:46 storagenode inadyn[23648]: Updating IPv4 cache for node-1.arrogantrabbit.com

Mar 12 18:20:51 storagenode inadyn[23648]: Update needed for alias node-1.arrogantrabbit.com, new IP# 104.193.135.243

Mar 12 18:21:01 storagenode inadyn[23648]: Updating IPv4 cache for node-1.arrogantrabbit.com

Mar 12 18:43:35 storagenode inadyn[23648]: Update needed for alias node-1.arrogantrabbit.com, new IP# 104.18.0.0

Mar 12 18:43:39 storagenode inadyn[23648]: Updating IPv4 cache for node-1.arrogantrabbit.com

Mar 12 18:45:44 storagenode inadyn[23648]: Update needed for alias node-1.arrogantrabbit.com, new IP# 104.193.135.243

Mar 12 18:45:52 storagenode inadyn[23648]: Updating IPv4 cache for node-1.arrogantrabbit.com

Mar 12 19:20:54 storagenode inadyn[23648]: Communication with checkip server 1.1.1.1 failed, run again with 'inadyn -l debug' if problem persists

Mar 12 19:20:54 storagenode inadyn[23648]: Retrying with built-in 'default', http://ifconfig.me/ip ...

Mar 12 19:20:55 storagenode inadyn[23648]: Please note, https://1.1.1.1/cdn-cgi/trace seems unstable, consider overriding it inyour configuration with 'checkip-server = default'

Mar 12 19:43:35 storagenode inadyn[23648]: Communication with checkip server 1.1.1.1 failed, run again with 'inadyn -l debug' if problem persists

Mar 12 19:43:35 storagenode inadyn[23648]: Retrying with built-in 'default', http://ifconfig.me/ip ...

Mar 12 19:43:36 storagenode inadyn[23648]: Please note, https://1.1.1.1/cdn-cgi/trace seems unstable, consider overriding it inyour configuration with 'checkip-server = default'

Mar 12 19:56:00 storagenode inadyn[23648]: Communication with checkip server 1.1.1.1 failed, run again with 'inadyn -l debug' if problem persists

Mar 12 19:56:00 storagenode inadyn[23648]: Retrying with built-in 'default', http://ifconfig.me/ip ...

Mar 12 19:56:00 storagenode inadyn[23648]: Please note, https://1.1.1.1/cdn-cgi/trace seems unstable, consider overriding it inyour configuration with 'checkip-server = default'

Mar 12 20:41:15 storagenode inadyn[23648]: Update needed for alias node-1.arrogantrabbit.com, new IP# 104.18.0.0

Mar 12 20:43:25 storagenode inadyn[23648]: Update forced for alias node-1.arrogantrabbit.com, new IP# 104.193.135.243

Mar 12 20:43:33 storagenode inadyn[23648]: Updating IPv4 cache for node-1.arrogantrabbit.com

Mar 12 21:47:37 storagenode inadyn[23648]: Communication with checkip server 1.1.1.1 failed, run again with 'inadyn -l debug' if problem persists

Mar 12 21:47:37 storagenode inadyn[23648]: Retrying with built-in 'default', http://ifconfig.me/ip ...

Mar 12 21:47:37 storagenode inadyn[23648]: Please note, https://1.1.1.1/cdn-cgi/trace seems unstable, consider overriding it inyour configuration with 'checkip-server = default'

Mar 12 22:28:55 storagenode inadyn[23648]: Communication with checkip server 1.1.1.1 failed, run again with 'inadyn -l debug' if problem persists

Mar 12 22:28:55 storagenode inadyn[23648]: Retrying with built-in 'default', http://ifconfig.me/ip ...

Mar 12 22:28:55 storagenode inadyn[23648]: Please note, https://1.1.1.1/cdn-cgi/trace seems unstable, consider overriding it inyour configuration with 'checkip-server = default'

Mar 12 22:43:13 storagenode inadyn[23648]: Update needed for alias node-1.arrogantrabbit.com, new IP# 104.18.0.0

Mar 12 22:43:25 storagenode inadyn[23648]: Updating IPv4 cache for node-1.arrogantrabbit.com

Mar 12 22:45:29 storagenode inadyn[23648]: Update needed for alias node-1.arrogantrabbit.com, new IP# 104.193.135.243

Mar 12 22:45:37 storagenode inadyn[23648]: Updating IPv4 cache for node-1.arrogantrabbit.com

Mar 12 23:22:46 storagenode inadyn[23648]: Communication with checkip server 1.1.1.1 failed, run again with 'inadyn -l debug' if problem persists

Mar 12 23:22:46 storagenode inadyn[23648]: Retrying with built-in 'default', http://ifconfig.me/ip ...

Mar 12 23:22:46 storagenode inadyn[23648]: Please note, https://1.1.1.1/cdn-cgi/trace seems unstable, consider overriding it inyour configuration with 'checkip-server = default'

Mar 13 00:45:08 storagenode inadyn[23648]: Update needed for alias node-1.arrogantrabbit.com, new IP# 104.18.0.0

Mar 13 00:47:12 storagenode inadyn[23648]: Received status 524, don't know what that means.

Mar 13 00:47:12 storagenode inadyn[23648]: Zone 'arrogantrabbit.com' not found.

Mar 13 00:47:12 storagenode inadyn[23648]: Error response from DDNS server, exiting!

Mar 13 00:47:12 storagenode inadyn[23648]: Error code 48: DDNS server response not OK

What the hell is 104.18.0.0?

Turns out, inadyn was using Cloudflare’s https://1.1.1.1/cdn-cgi/trace, which intermittently returned 104.18.0.0 (a Cloudflare network address) instead of my public IP. Inadyn accepted that value and pushed it to the A record, then corrected it later when the fallback (ifconfig.me) returned the real IP, causing repeated flips between the valid and the bogus address.
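For illustration, here is a minimal Python sketch of the kind of sanity check that would have caught this. It is not what inadyn does internally; the function names are made up for the example, and the `104.16.0.0/13` block is my assumption of a Cloudflare-owned range that happens to contain 104.18.0.0. The idea is to pull the `ip=` line out of a `cdn-cgi/trace` style response and refuse to treat it as my public IP if it is not globally routable or falls inside a known-suspect network.

```python
import ipaddress

# Assumption: a Cloudflare-owned range that contains 104.18.0.0.
# Extend this list with whatever networks you never expect to be "you".
SUSPECT_NETWORKS = [ipaddress.ip_network("104.16.0.0/13")]


def parse_trace_ip(body):
    """Return the value of the 'ip=' line of a key=value trace body, or None."""
    for line in body.splitlines():
        key, sep, value = line.partition("=")
        if sep and key.strip() == "ip":
            return value.strip()
    return None


def is_plausible_public_ip(text):
    """True only for a parseable, globally routable address outside SUSPECT_NETWORKS."""
    if not text:
        return False
    try:
        addr = ipaddress.ip_address(text)
    except ValueError:
        return False
    if not addr.is_global:
        return False
    return not any(
        addr in net for net in SUSPECT_NETWORKS if net.version == addr.version
    )
```

With a check like this in front of the DNS update, a response carrying `ip=104.18.0.0` would be dropped instead of being pushed to the A record.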

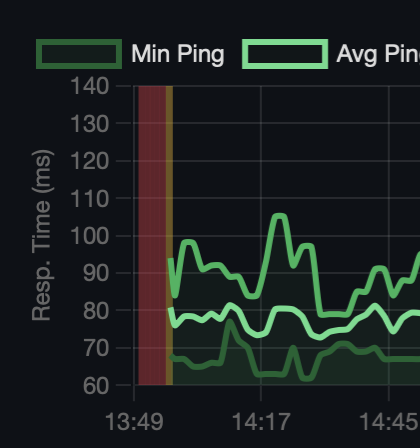

Apparently, Storj caches the IP at some other cadence that, I guess, happened to line up well enough (or maybe it disregards bad updates that don’t have a node on the other side, which would be smart!). This explains why the node kept working. My probe monitors, on the other hand, were using the FQDN, which was flipping like a turd in the wind.

Forcing a stable checkip provider (e.g. checkip-server = ifconfig.me or checkip-server = default) and clearing the inadyn cache eliminates the issue.
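To confirm the flipping has actually stopped, something like the following rough Python sketch can be pointed at the log. It assumes the inadyn message format shown in the log excerpt above; the helper names are hypothetical. It extracts every "Update needed/forced" line and counts how often the announced IP changes from one update to the next.

```python
import re

# Matches inadyn lines such as:
#   "... Update needed for alias node-1.arrogantrabbit.com, new IP# 104.18.0.0"
UPDATE_RE = re.compile(r"Update (?:needed|forced) for alias (\S+), new IP# (\S+)")


def ip_updates(lines):
    """Yield (alias, ip) for every update line, in log order."""
    for line in lines:
        match = UPDATE_RE.search(line)
        if match:
            yield match.group(1), match.group(2)


def count_flips(lines):
    """Count how many times the announced IP differs from the previous one."""
    ips = [ip for _, ip in ip_updates(lines)]
    return sum(1 for prev, cur in zip(ips, ips[1:]) if prev != cur)
```

Feeding it the open log file, e.g. `count_flips(open("/var/log/messages", errors="replace"))`, should give a number that stops growing once the checkip provider is stable.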

Mystery solved.

Big thanks to @mike